All-in on AI: The Biggest Bet of All Time

By Curt R. Stauffer

At a $5 trillion market capitalization, Nvidia's valuation exceeds the annual GDP of every nation in the world, except for the United States and China. The expected $1.3 trillion in global aggregate capital expenditures for AI infrastructure between 2024 and 2029 is larger than the inflation-adjusted cost of the space race of the 1960s, and it appears to dwarf the infrastructure spending on networking and data storage during the Dot-com bubble period of 1996-2000.

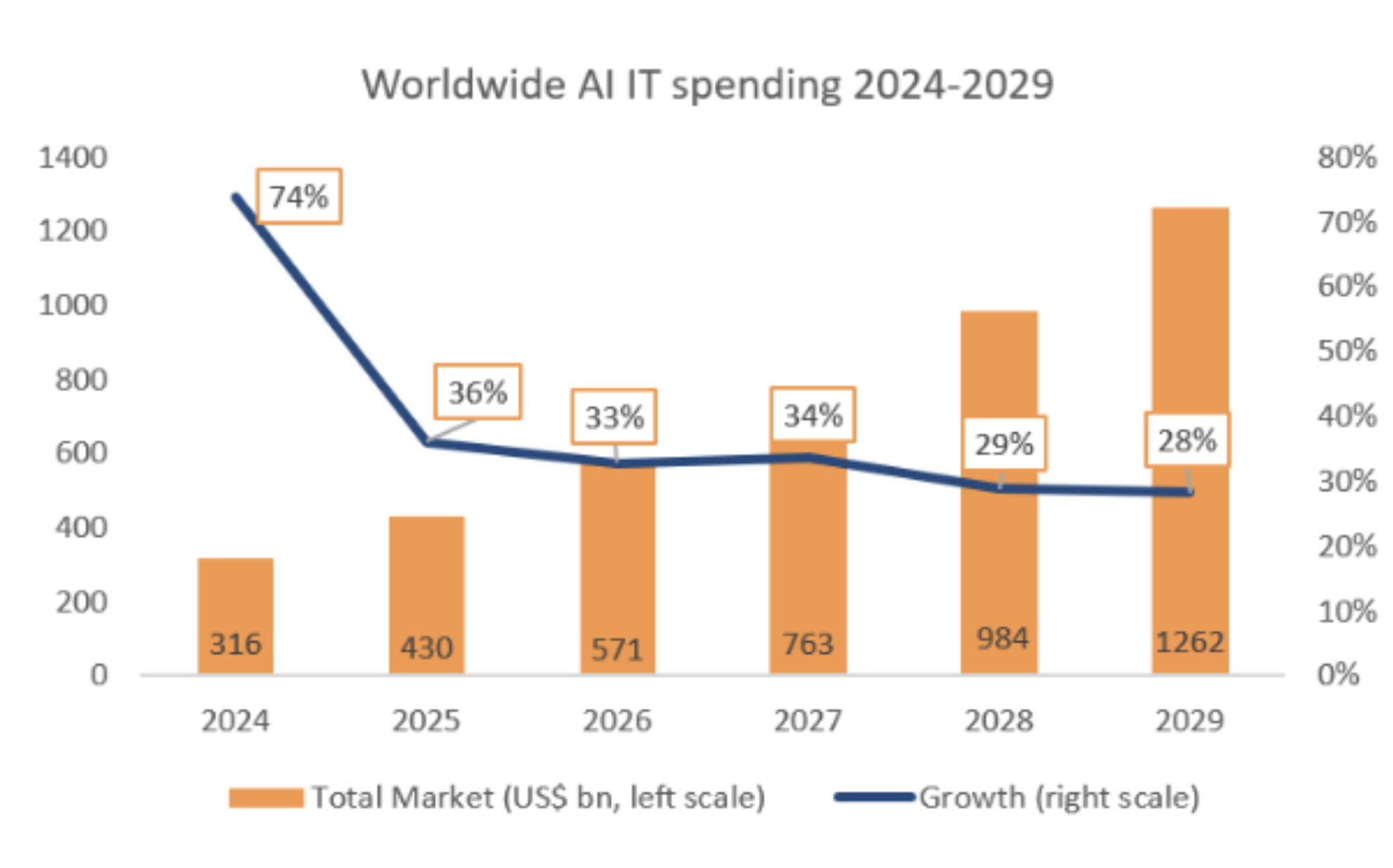

Here is a forecast published recently by Syz Bank on the expected AI CAPEX spending through 2029:

On January 7, 2000, just before the technology stock bubble fueled by internet infrastructure spending burst, Time magazine published an article titled "Do You Know Cisco?" Cisco Systems is the closest Dot-com era analog to Nvidia today. Cisco was the market leader in network routers and switches, the equipment that enabled the telecommunications grid and corporate networks to transport Internet Protocol (IP) data and made nationwide internet connectivity possible. The Time article indicated that Cisco "built dominant market share in a crucial high-technology industry, controlling 50% of the $21 billion business-network market."

There were other critical categories of infrastructure, such as data storage, internet servers, and network-capable PCs. Thus, if business-network equipment was a $21 billion market in 1999, presumably the peak spending year of that cycle, and we assume that it represented 50% of the total internet infrastructure market that year, peak-year total CAPEX would have been $42 billion. I will assume for illustrative purposes that such expenditures totaled $12 billion, $22 billion, and $32 billion in 1996, 1997, and 1998, respectively. Under these assumptions, the "ballpark" total for internet-related CAPEX over the four years from 1996 to 1999 is $108 billion. Using the BLS CPI inflation calculator, these four years of spending equate to $213 billion in today's dollars. Extrapolating to the rest of the world, if all other countries in aggregate spent as much as the U.S. on internet-related CAPEX in the late 1990s, that exuberant period of internet-related technology spending would have totaled approximately $426 billion on an inflation-adjusted basis.
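The arithmetic behind this ballpark estimate can be retraced in a few lines. The Python sketch below is illustrative only: it uses the figures stated above, the names are hypothetical, and the inflation factor is backed out from the $213 billion and $108 billion totals rather than looked up from BLS data.

```python
# Figures stated in the text, in billions of U.S. dollars.
BUSINESS_NETWORK_MARKET_1999 = 21.0
ASSUMED_NETWORK_SHARE = 0.50  # text's assumption: networking was 50% of the total
ILLUSTRATIVE_SPENDING = {1996: 12.0, 1997: 22.0, 1998: 32.0}  # text's illustrative guesses
REAL_TOTAL_TODAY = 213.0  # inflation-adjusted total quoted in the text
REST_OF_WORLD_MULTIPLE = 2.0  # text's assumption: rest of world matched U.S. spending


def dotcom_capex_estimate():
    """Return each step of the ballpark estimate, in billions of dollars."""
    peak_1999 = BUSINESS_NETWORK_MARKET_1999 / ASSUMED_NETWORK_SHARE
    nominal_total = sum(ILLUSTRATIVE_SPENDING.values()) + peak_1999
    # Implied by the two totals in the text; not taken from BLS data.
    implied_inflation_factor = REAL_TOTAL_TODAY / nominal_total
    global_real_total = REAL_TOTAL_TODAY * REST_OF_WORLD_MULTIPLE
    return {
        "peak_1999": peak_1999,
        "nominal_total_1996_1999": nominal_total,
        "implied_inflation_factor": implied_inflation_factor,
        "global_real_total": global_real_total,
    }


if __name__ == "__main__":
    for name, value in dotcom_capex_estimate().items():
        print(f"{name}: {value:,.2f}")
```

The implied inflation factor works out to roughly 1.97, meaning the estimate treats a late-1990s dollar as worth about two of today's dollars. Every input other than the $21 billion market size is an assumption, so the output is only as good as those guesses.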

Whether we look at the space race of the 1960s or the heyday of internet technology spending in the late 1990s, each of these generational CAPEX cycles amounted to roughly $425 billion in inflation-adjusted terms, or less than one-third of the $1.3 trillion forecast for AI infrastructure spending through 2029.

I do not question that AI will be a transformational technology for governments, businesses, science, and individuals. The biggest questions are these: What business model will deliver a return on investment sufficient to justify the massive spending that a company like OpenAI is undertaking to maintain its leadership position in developing AI? And how will a company like Alphabet (Google) develop and monetize AI without significantly displacing its most profitable business, advertising-supported Search? However, the stock market and investors are not waiting around for answers to these questions.

Just this month, questions have begun to surface publicly about the sustainability of the current pace and scale of spending on AI infrastructure. In a November 7, 2025, Forbes article titled "Why Sam Altman Won't Be On The Hook For OpenAI's Massive Spending Spree," the authors wrote that, in a long-winded post on X, Altman addressed the question of what happens to OpenAI if its web of deals falls apart:

"If we screw up and can't fix it, we should fail, and other companies will continue doing good work and servicing customers," Altman said. "...We of course could be wrong, and the market, not the government, will deal with it if we are."

The Forbes authors went further and discussed just how staggering the growth at OpenAI will need to be to meet its ambitious commitments. They wrote: "In order to come through on its compute commitments, OpenAI's revenue would have to grow to an estimated $577 billion by 2029, roughly the size of Google's revenue that same year, Tomasz Tunguz, a general partner at Theory Ventures, wrote in a recent blog post. That's a roughly 2900% jump from its current projections for 2025."
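To put the quoted growth figure in perspective, the short Python sketch below backs out what it implies. It rests on two assumptions that are not in the Forbes article: that "a roughly 2900% jump" means an increase of 2,900% over the 2025 base (a 30-fold multiple), and that the growth compounds over the four years from 2025 to 2029. The function name is hypothetical.

```python
def implied_growth(target_bn, pct_jump, years):
    """Back out the multiple, starting base, and annual growth rate.

    target_bn: target revenue in billions of dollars
    pct_jump:  percentage increase over the base (assumed interpretation)
    years:     number of compounding years (assumed to be 4 for 2025-2029)
    """
    multiple = 1 + pct_jump / 100        # a 2,900% increase is 30 times the base
    implied_base_bn = target_bn / multiple
    annual_growth = multiple ** (1 / years) - 1
    return multiple, implied_base_bn, annual_growth


if __name__ == "__main__":
    multiple, base_bn, cagr = implied_growth(target_bn=577.0, pct_jump=2900.0, years=4)
    print(f"multiple: {multiple:.0f}x")
    print(f"implied 2025 base: ${base_bn:.1f} billion")
    print(f"implied annual growth: {cagr:.0%}")
```

On those assumptions, the quoted numbers imply a 2025 base of roughly $19 billion and growth of about 134% per year for four consecutive years. The base is only what the quoted figures imply, not a reported revenue number.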

"But OpenAI has options. One likely scenario is that the AI company pays for and utilizes only a portion of the compute it has booked, said D.A. Davidson analyst Gil Luria. In that case, companies like Oracle, Amazon, Microsoft, CoreWeave, and others will most likely renegotiate the contracts and ensure they get at least some amount of business from OpenAI, especially if the alternative is getting none at all. "They don't want OpenAI to go bankrupt, so their incentive is to renegotiate," he told Forbes."

The Register published an article on October 29, 2025, titled "Microsoft just revealed that OpenAI lost more than $11.5B last quarter." The article examined what could be inferred from Microsoft's latest quarterly earnings report, given that Microsoft owns more than 25% of privately held OpenAI. The author wrote: "Microsoft reported earnings for the quarter ended Sept. 30 on Wednesday after market close and buried in its financial filings were a couple of passages suggesting that OpenAI suffered a net loss of $11.5 billion or more during the quarter."

No two companies epitomize the "AI revolution" more than privately held OpenAI and the world's largest company, Nvidia (NVDA). Nvidia is unquestionably a financial success, but OpenAI is a very large "open" question. However, Nvidia's valuation and continued success depend on OpenAI's ability to continue facilitating, if not outright building, significantly more compute capacity. And OpenAI's ability to drive these significant increases in compute depends on the willingness of companies like Amazon (AMZN), Microsoft (MSFT), and Oracle (ORCL) to commit billions, if not trillions, of dollars in aggregate to build out data centers across the United States in the coming years. But willingness and financial resources might not be enough. Turning willingness and hundreds of billions of dollars in cash into operating data centers requires far more than money and effort.

I am not surprised that investors extrapolate year after year of uninterrupted growth into their current enthusiasm for the stocks of pure-play AI companies and other companies that are heavily leaning into the AI build-out narrative. This is simply the way investor psychology has always manifested itself in market prices.

As someone who was on the front lines as an equity analyst and portfolio manager during the last big technology-driven stock market mania, I am on high alert for the "speedbumps" that could reset expectations lower and flush out the valuation premiums that come with being priced for perfection. That risk is what should concern cautious investors.

For the eventual monetization of the AI build-out to unfold as perfectly as today's narrative presumes, all the following barriers will need to be mitigated over the next several years.

Electricity Capacity:

Liquid Cooling Systems:

Networking & Optical Components:

Data centers are extremely complex facilities that require far more technology than just racks of servers running on Nvidia GPU chips. These facilities require the most advanced "plumbing" for both incoming and outgoing data. The data streams into, out of, and within these data centers require both fiber-optic cables and optical switching. This equipment, similar to the cooling system, is capacity-constrained.

Labor Shortages:

Rare Earth Material:

According to a commentary written by Gracelin Baskaran, Director of the Critical Minerals Security Program, published by the Center for Strategic & International Studies on January 8, 2024, 60% of current rare earth materials come from China, and nearly 90% of refined rare earth materials sold around the world come from China. The supply of these critical elements for use in semiconductors and other essential technology components is fraught with disruption risk.

Advanced Lithography EUV Machines:

Solely Manufactured by ASML: ASML, a Dutch company, is the sole global manufacturer of the EUV lithography equipment used by semiconductor fabricators like Taiwan Semiconductor and Intel to make today's most advanced chips, including Nvidia's latest GPU chips that dominate the market for AI data centers. These ASML machines cost between $200 million and $300 million each, and ASML has an order backlog spanning two to three years. ASML has reported that, based on current orders, it expects to deliver 56 of its Low-NA EUV machines and only 10 of its High-NA EUV machines in 2027. ASML's monopoly, which it has maintained for the past decade, is not expected to be challenged in the foreseeable future.

I have written about ASML several times over the last few years, and its monopoly is a compelling investment opportunity at the right price. At the same time, this monopoly leaves the AI revolution entirely dependent on one company's ability to continue innovating and to increase its capacity to deliver highly complex machines, which are built using a supply chain of very specialized producers of lasers and mirrors.

Compute power is a function of chip wafer size because the more chips that can be stacked in a device or server, the more compute power can be derived. Nvidia's Rubin chips will still be manufacturable using ASML's Low-NA EUV machines. However, Rubin's successor, expected in 2028, will require ASML's recently introduced High-NA EUV machines, which will presumably be in short supply for several more years.

The better you understand what enables the innovation that Nvidia ushered in with its GPU chips for Artificial Intelligence (AI), along with the ancillary but critical infrastructure needed to build enough data centers to realize the AI promises being made by Nvidia, Microsoft, Oracle, Amazon, Google, and OpenAI, the more you appreciate all of the things that must go exactly right for the market's great enthusiasm to be borne out.

Bubble Talk

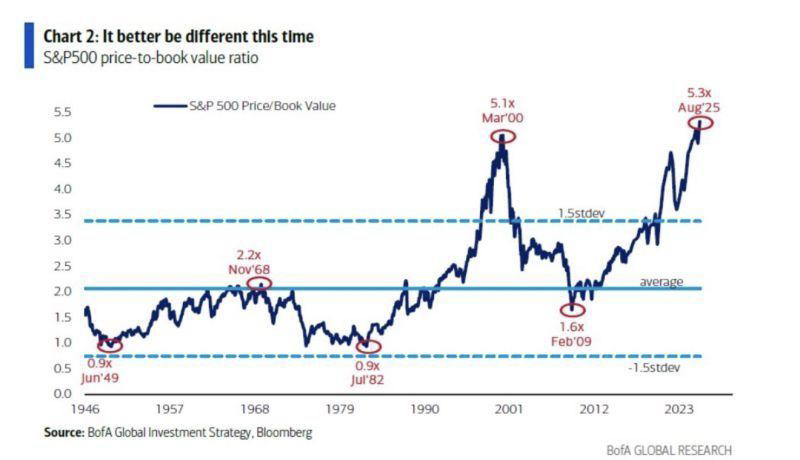

Jonathan Baird, Editor and Publisher of the Global Investment Letter, wrote an article on November 9, 2025, titled "It Better Be Different This Time: Valuations Back to Dot-Com High."

Baird wrote: "The S&P 500's price-to-book ratio has reached 5.3x, its highest level since the peak of the dot-com bubble in 2000. For context, that ratio averaged around 2.9x over the past 80 years and has touched these extremes only twice before: March 2000 (5.1x) and today."

"History rarely repeats precisely, but valuation extremes tend to rhyme. In 1968 and 1999, investors also believed structural change justified permanently higher multiples. The "Nifty Fifty" were said to be one-decision stocks; the Internet boom was expected to rewrite the rules of profitability. In both cases, genuine innovation collided with the limits of market psychology."

He included the graph above in that article. There have been numerous such graphs circulating over the last several months that display various indicators reminiscent of 1999 or 2000. I included this one only because it accompanied Jonathan Baird's letter, not because I believe that Price-to-Book is a better valuation measure than others. However, I do agree with Baird that these types of comparisons should not be ignored. Instead, they should serve as a reminder that investor psychology surrounding the AI technology revolution is leading many valuation measures to bump up against or exceed those from past innovation-led bull markets in stocks.

As an investment manager, I firmly believe that blindly chasing market returns by following the herd has, more often than not, led to a painful period of corrective price action. I want more than anything to deliver market-beating returns at any given time, and I will attempt to do so, but not by blindly following the herd. In innovation-led, exuberant markets, I will place limits on exposure to the highest-momentum areas of the market and look for underappreciated beneficiaries of innovation-driven secular growth areas of the economy, as well as early leaders in what may be a second-derivative area of innovation.

From a technological perspective, over the last two years, our portfolios have added exposure to quantum computing, quantum sensing, data center cooling systems, advanced electronics for data centers, and upgrades necessary for our electric grid, as well as AI robotics software and advanced semiconductor manufacturing equipment (ASML). What we will not do is continue to buy Nvidia (NVDA) and the other so-called hyperscale companies solely because they continue to rise in price and, by their sheer size, drive the market cap-weighted indices higher and higher.

Wall Street and the investment consulting world operate within time increments measured in quarters. This short time horizon tends to lead stock analysts and portfolio managers to chase the currently best-performing area of the market, making momentum a key factor in most quantitatively driven models and trading systems.

Our time horizon is much longer, and most of the time, we view high momentum as a cautionary sign, not a green light to double down.

Our clients value not being part of the herd and maintaining a focus on long-term opportunities, rather than chasing what is deemed "hot" at the moment. We are constantly on the lookout for new clients who share our version of prudence and discipline. If you are one of these people and not currently a client, reach out and have a conversation. If you are a client and know someone who is becoming uncomfortable with the herd mentality, please make a referral so that we can have a meaningful conversation about a more thoughtful approach. Thank you!